A Global Perspective on the Challenges and Innovation within Judicial Governance and the Role of AI

By Steven Thiru, President, Commonwealth Lawyers Association

“AI is now a given in all our lives, at work, in school, and at home. It is already ubiquitous, and will continue to become more indispensable. The genie has been let out of the bottle and we cannot put it back.”[1]

The Honourable Justice Aidan Xu, Judge of the High Court of Singapore (Judge in Charge of Transformation and Innovation, Singapore Judiciary)

Artificial Intelligence in Courts: Current Applications and Emerging Practice

Artificial intelligence (‘AI’) is no longer a distant promise; it is a present reality that is already shaping how our courts operate. From case management to legal research and decision-support tools, AI is steadily transforming the administration of justice. The real question before us is no longer whether AI belongs in our courtrooms, but how we ensure that it serves the enduring values of fairness, transparency, and the rule of law.

A clear illustration of how firmly AI has taken root in the courtroom can be seen in Tanzania, where the Judiciary has introduced AI-powered transcription and translation tools to improve court efficiency nationwide, as announced by Chief Justice Professor Ibrahim Hamis Juma.

Faced with the impracticality of hiring stenographers for its large judicial workforce — comprising Court of Appeal judges, High Court judges, and about 2,000 magistrates — the Judiciary has adopted AI to automate transcription. This allows judges to focus on listening and decision-making rather than administrative recording. Developed in collaboration with the Italian technology firm Almawave, the system has been trained on diverse Kiswahili dialects and Tanzanian English to ensure accurate multilingual functionality.[2]

Singapore offers another important example of judicial innovation. In his speech at the Sejong International Judicial Conference in September 2025, Chief Justice Sundaresh Menon explained that the Singapore courts have been experimenting with Harvey.AI to support users in the Small Claims Tribunals, where parties are self-represented.[3] Rather than replacing judicial decision-making, the technology is used to enhance access to justice through practical support functions, including on-demand translation of court documents into Chinese, Malay, and Tamil.[4]

It has also been used to summarise parties’ documents so that litigants can better understand each other’s cases and potentially resolve disputes earlier. The Singapore courts have further indicated that, in time, such tools may help self-represented persons to draft and file claims and defences, organise evidence, and prepare submissions — illustrating a measured and user-centred approach to AI deployment in the justice system.[5]

Judicial Responsibility and the Limits of Algorithmic Decision-Making

With the increasing integration of artificial intelligence into judicial processes, judges must exercise heightened caution to ensure that its use does not compromise judicial independence, procedural fairness, or the integrity of their decision-making.

Judicial decision-making is not a mechanical exercise; it requires moral reasoning, contextual understanding, and the careful balancing of competing rights and interests. If innovation is introduced without clear safeguards, it may inadvertently compromise due process, undermine confidence in judicial outcomes, and create perceptions that justice is being automated rather than thoughtfully adjudicated. In this regard, Chief Justice Sundaresh Menon has rightly cautioned that “the allure of AI and the possibility of ‘AI judges’ should not cause us to lose sight of the aspects of judging that remain, and should remain, a fundamentally human endeavour”.[6]

This concern is exemplified by tools such as the Correctional Offender Management Profiling for Alternative Sanctions (‘COMPAS’),[7] an algorithmic risk-assessment system designed to estimate the likelihood that a defendant will reoffend. Developed as part of a broader shift toward data-driven criminal justice, COMPAS is used by courts and correctional authorities in parts of the United States to inform decisions relating to bail, sentencing, and supervision.

By evaluating a range of personal and criminal history variables, including prior convictions, behavioural patterns, and questionnaire responses, the system generates a numerical risk score intended to provide a structured, evidence-based assessment of the risk a defendant poses to public safety.

Despite its intended neutrality, COMPAS has attracted significant scrutiny over fairness and bias. Empirical analyses have identified disparities in error rates across demographic groups, particularly in false positives and false negatives. In some analyses, defendants in certain groups were more likely to be incorrectly classified as high risk despite not reoffending (false positives), while defendants in other groups were more frequently assessed as low risk despite subsequently committing new offences (false negatives).

Scholars[8] have attributed these discrepancies, in part, to the underlying data and variables used, noting that socio-economic indicators and historical arrest patterns may reflect broader structural inequalities. Consequently, concerns have been raised that such systems may inadvertently replicate or reinforce existing biases within the criminal justice framework.

Further concerns arise in relation to the role of COMPAS within judicial decision-making and its implications for fairness and accountability. Although COMPAS is designed to support rather than replace judicial discretion, there is apprehension that decision-makers may place undue reliance on its outputs, potentially reducing complex human circumstances to simplified numerical scores. These issues continue to fuel debate on whether algorithmic tools can deliver outcomes that are not only efficient, but also fair, explainable, and dependable within the justice system.

Establishing Normative Frameworks for the Responsible Use of AI in Courts

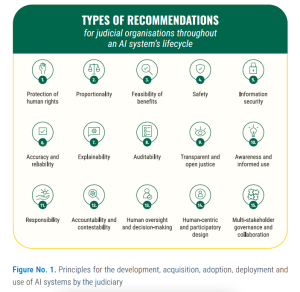

The UNESCO Guidelines for the Use of AI Systems in Courts and Tribunals[9] offer principles and specific recommendations for judicial organisations and individuals implementing AI systems, including generative AI. In this regard, ‘principles’ refer to general prescriptions intended to guide organisations and individuals in developing, acquiring, and using AI systems in an ethical manner that respects human rights.

The Guidelines set out 15 such principles, encompassing key considerations such as information security, auditability, and the preservation of human oversight and decision-making.[10] They also include specific recommendations directed at both judicial institutions and individual members of the judiciary, focusing on the actions to be taken at each stage of an AI system’s lifecycle. Ultimately, the Guidelines are designed to serve as a reference point for the development of tailored national and subnational frameworks.

In this respect, the Guidelines assume significance as an emerging international benchmark for the responsible use of AI within judicial systems. In an era marked by globalisation, where legal disputes, commercial transactions, and even evidentiary processes frequently traverse national boundaries, a degree of normative alignment is increasingly necessary. The articulation of shared principles provides a common reference framework that can promote consistency, predictability, and mutual trust across jurisdictions.

The 15 principles are illustrated in the image above.

This emphasis on principled governance is also reflected at a domestic level in the Victorian Law Reform Commission’s (‘VLRC’) consultation paper on Artificial Intelligence in Victoria’s Courts and Tribunals. Like UNESCO, the VLRC identifies the following eight guiding principles for the responsible use of AI in courts and tribunals:

- impartiality and fairness;

- accountability and independence;

- transparency and open justice;

- contestability and procedural fairness;

- privacy and data security;

- access to justice;

- efficiency; and

- human oversight and monitoring.[11]

In essence, the VLRC attempts to translate broad ethical concerns into principles tailored to the judicial context. It recognises that although AI may improve efficiency and enhance accessibility, such benefits cannot be pursued at the expense of fairness, equality, or the integrity of adjudication.

AI and the Legal Profession: Duties, Responsibilities and Risks

The Commonwealth Lawyers Association (‘CLA’) Declaration on the Use of AI provides a critical framework for lawyers.[12] Recognising both the transformative potential of AI and the unprecedented risks it poses, the Declaration calls on legal professionals and institutions to adopt a collaborative, transparent, and ethical approach to AI governance. By outlining seven overarching principles, the Declaration establishes a clear standard for ensuring that AI systems respect human life, rights, and ethical norms while remaining accountable and safe.

Foremost among these principles is the primacy and sanctity of human life. For lawyers, this underscores the imperative that AI should never compromise human well-being, whether through direct harm or through inaction that allows harm to occur. Legal professionals are uniquely positioned to enforce this principle by ensuring that AI systems used in judicial or advisory capacities remain under ultimate human control, thereby maintaining accountability in legal processes and decisions.

Respect for ethical principles and human rights is another cornerstone of the CLA Declaration. AI systems must enhance, rather than infringe upon, individual freedoms, privacy, and dignity. Lawyers must advocate for the integration of equity and inclusion measures, ensuring that AI tools do not perpetuate discrimination or bias. Moreover, transparency and accountability mechanisms are essential, enabling oversight, explainability, and accessible remedies in cases where AI decisions impact people’s rights.

Closely linked to the protection of human rights is the principle of adhering to international privacy and data governance standards. Lawyers play a pivotal role in safeguarding personal information by enforcing robust data protection measures, securing informed consent for data use, and ensuring data minimisation. This responsibility is critical in legal practice, where sensitive information is handled daily, and where breaches or misuse could have profound consequences for clients and justice systems alike.

Security and safety in AI systems are equally critical. The CLA Declaration emphasises the need for “security by design”, proactive risk assessments, and emergency protocols to prevent and address potential harms. Lawyers, particularly those advising on compliance and risk, are integral to shaping policies that embed these safeguards into AI development and deployment, ensuring that systems operate reliably and ethically.

The emphasis on innovation and sustainability reflects the need to balance technological advancement with social and environmental responsibility. Legal professionals can guide the adoption of AI in ways that support sustainable development goals, encourage open innovation, and implement governance structures that are adaptable to the evolving AI landscape. By doing so, lawyers help ensure that AI contributes positively to society while remaining accountable and fair.

International collaboration and governance form another vital dimension. Lawyers often operate in cross-border contexts where harmonised standards are essential for consistency, trust, and cooperation. The CLA Declaration encourages multi-stakeholder engagement and global standards, providing a framework for legal professionals to support international efforts in AI research, development, and regulation while promoting cultural sensitivity and ethical universality.

Finally, the CLA Declaration highlights the importance of environmental stewardship. Lawyers can influence the design, procurement, and deployment of AI systems to minimise ecological impacts, ensuring that legal practice contributes to broader societal obligations, including environmental protection.

Taken together, the principles articulated in the CLA Declaration provide a comprehensive ethical compass for lawyers, reaffirming that AI, when deployed responsibly, can enhance the legal profession’s capacity to uphold justice, protect rights, and serve the public good.

The Way Forward: Balancing Innovation and Justice

The conversation about AI in the judiciary is not binary; it is not a choice of resistance versus acceptance. It is about responsibility versus haste. While technology offers powerful tools to enhance efficiency, accessibility, and consistency, the administration of justice must remain firmly anchored in human judgment, ethical reasoning, and constitutional safeguards. Courts must therefore embrace innovation with both discernment and resolve, ensuring that every technological integration is guided by clear principles, robust oversight, and an unwavering commitment to fairness and accountability. Only through such a measured approach can we ensure that the rule of law endures in this age of artificial intelligence, rather than yielding to the growing influence of technology.

Steven Thiru

President

Commonwealth Lawyers Association

22 March 2026 (updated on 15 April 2026)

This is an updated version of a speech that was delivered by CLA President Steven Thiru on 22 March 2026 at the 1st Supreme Court Bar Association of India National Conference 2026 held on 21 and 22 March 2026 in Bengaluru, India, themed ‘Reimagining Judicial Governance: Strengthening Institutions for Democratic Justice’.

Steven Thiru records his appreciation to Jaishanker Sadananda and Allysha Anne Ronald for their assistance in preparing this speech.

[1] Xu, A. (2025, July 30). The use (and abuse) of AI in court. Speech at the IT Law Series 2025: Legal and Regulatory Issues with Artificial Intelligence. Singapore Judiciary. https://www.judiciary.gov.sg/news-and-resources/news/news-details/justice-aidan-xu--speech-at-the-it-law-series-2025--legal-and-regulatory-issues-with-artificial-intelligence

[2] Afriwise. (2024, May 6). Tanzanian judiciary introduces AI for enhanced efficiency. https://www.afriwise.com/blog/tanzanian-judiciary-introduces-ai-for-enhanced-efficiency

[3] Menon, S. (2025, September 29). The role of technology in justice systems: A vision of accessible and collaborative justice. Speech at the Sejong International Judicial Conference. Singapore Judiciary. https://www.judiciary.gov.sg/news-and-resources/news/news-details/chief-justice-sundaresh-menon---the-role-of-technology-in-justice-systems--a-vision-of-accessible-and-collaborative-justice

[4] Ibid.

[5] Ibid.

[6] Menon, S. (2024, April 13). Judicial responsibility in the age of artificial intelligence. Keynote speech at the Inaugural Singapore-India Conference on Technology. Singapore Judiciary. https://www.judiciary.gov.sg/news-and-resources/news/news-details/chief-justice-sundaresh-menon--keynote-speech-at-the-inaugural-singapore-india-conference-on-technology

[7] Bradshaw, R. (2025, October 1). Interpreting COMPAS: Fairness metrics and human impact. Medium. https://medium.com/@rachaelannbradshaw/interpreting-compas-fairness-metrics-and-human-impact-b7cac35a98de

[8] Herrschaft, B. A. (2014). Evaluating the reliability and validity of the Correctional Offender Management Profiling for Alternative Sanctions (COMPAS) tool (Doctoral dissertation, Rutgers University). Rutgers University Community Repository. https://doi.org/10.7282/T38C9XX2

[9] United Nations Educational, Scientific and Cultural Organization. (2025). Guidelines for the use of AI systems in courts and tribunals. https://unesdoc.unesco.org/ark:/48223/pf0000396582

[10] United Nations Educational, Scientific and Cultural Organization. (2025, December 3). AI in the courtroom: UNESCO’s new guidelines for the judiciary. https://www.unesco.org/en/articles/ai-courtroom-unescos-new-guidelines-judiciary

[11] Victorian Law Reform Commission. (2024, October). Artificial intelligence in Victoria’s courts and tribunals. https://www.lawreform.vic.gov.au/wp-content/uploads/2024/10/VLRC_AI_Courts_CP_web.pdf

[12] Commonwealth Lawyers Association. (2025, April 9). Declaration on AI. https://www.commonwealthlawyers.com/wp-content/uploads/2025/05/CLA-Declaration-on-AI.pdf